Implementation Guide

Airbyte Self-Managed Enterprise is in an early access stage for select priority users. Once you are qualified for a Self-Managed Enterprise license key, you can deploy Airbyte with the following instructions.

Airbyte Self-Managed Enterprise must be deployed using Kubernetes. This is to enable Airbyte's best performance and scale. The core components (API server, scheduler, etc.) run as deployments, while the scheduler launches connector-related pods on different nodes.

Prerequisites

Infrastructure Prerequisites

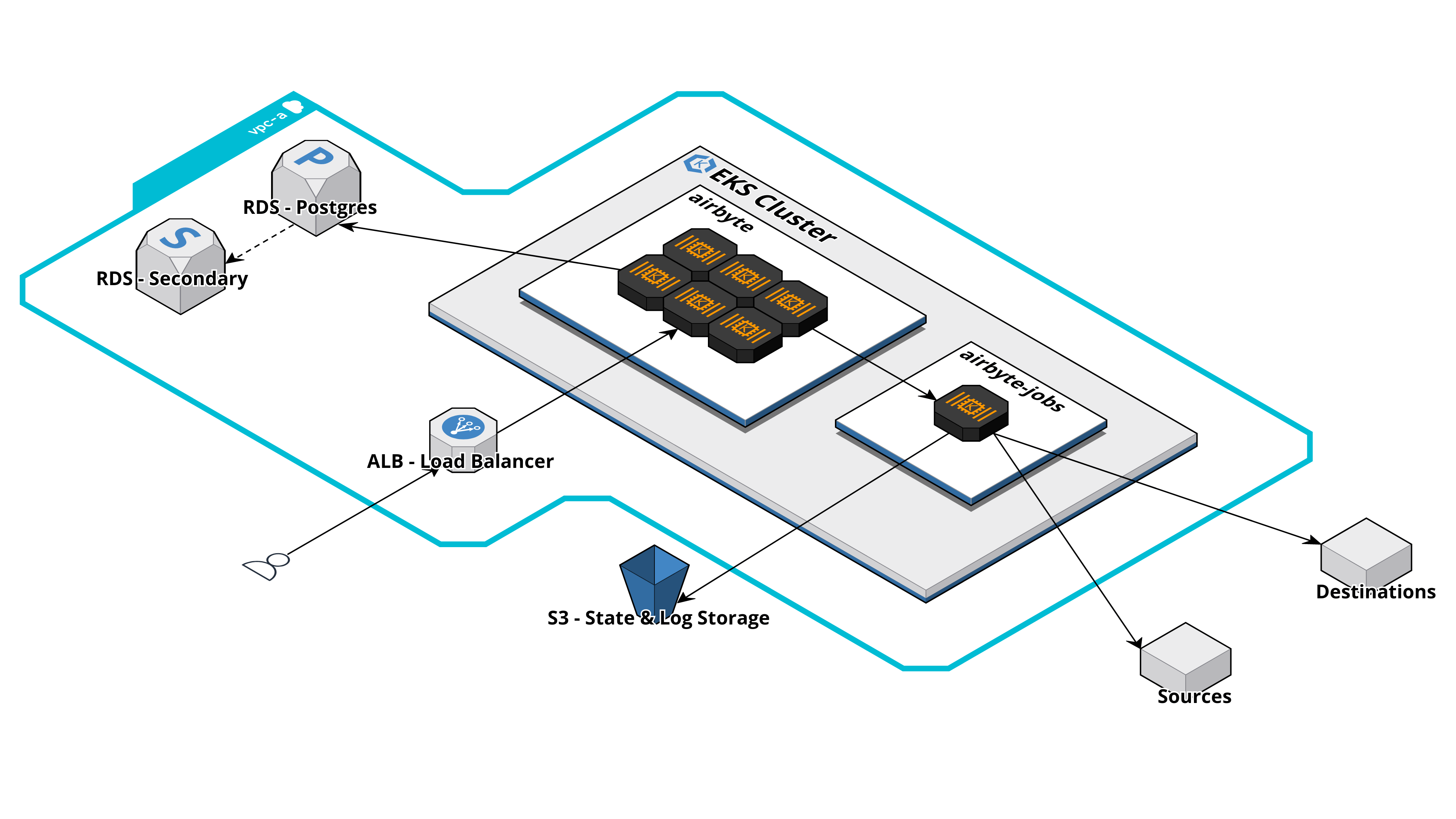

For a production-ready deployment of Self-Managed Enterprise, various infrastructure components are required. We recommend deploying to Amazon EKS or Google Kubernetes Engine. The following diagram illustrates a typical Airbyte deployment running on AWS:

Prior to deploying Self-Managed Enterprise, we recommend having each of the following infrastructure components ready to go. When possible, it's easiest to have all components running in the same VPC. The provided recommendations are for customers deploying to AWS:

| Component | Recommendation |
|---|---|
| Kubernetes Cluster | Amazon EKS cluster running in 2 or more availability zones on a minimum of 6 nodes. |
| Ingress | Amazon ALB and a URL for users to access the Airbyte UI or make API requests. |
| Object Storage | Amazon S3 bucket with two directories for log and state storage. |
| Dedicated Database | Amazon RDS Postgres with at least one read replica. |
| External Secrets Manager | Amazon Secrets Manager for storing connector secrets. |

We require you to install and configure the following Kubernetes tooling:

- Install helm by following these instructions.
- Install kubectl by following these instructions.
- Configure kubectl to connect to your cluster by using kubectl config use-context my-cluster-name:

Configure kubectl to connect to your cluster

Amazon EKS:

- Configure your AWS CLI to connect to your project.
- Install eksctl.
- Run eksctl utils write-kubeconfig --cluster=$CLUSTER_NAME to make the context available to kubectl.
- Use kubectl config get-contexts to show the available contexts.
- Run kubectl config use-context $EKS_CONTEXT to access the cluster with kubectl.

GKE:

- Configure gcloud with gcloud auth login.
- On the Google Cloud Console, the cluster page will have a "Connect" button, with a command to run locally: gcloud container clusters get-credentials $CLUSTER_NAME --zone $ZONE_NAME --project $PROJECT_NAME.
- Use kubectl config get-contexts to show the available contexts.
- Run kubectl config use-context $GKE_CONTEXT to access the cluster with kubectl.

We also require you to create a Kubernetes namespace for your Airbyte deployment:

kubectl create namespace airbyte

Installation Steps

Step 1: Add Airbyte Helm Repository

Follow these instructions to add the Airbyte helm repository:

- Run helm repo add airbyte https://airbytehq.github.io/helm-charts, where airbyte is the name of the repository that will be indexed locally.
- Perform the repo indexing process, and ensure your helm repository is up-to-date by running helm repo update.
- You can then browse all charts uploaded to your repository by running helm search repo airbyte.

Step 2: Create your Helm Values File

- Create a new airbyte directory. Inside, create an empty airbyte.yml file.
- Paste the following into your newly created airbyte.yml file. This is the minimal values file to be used to deploy Self-Managed Enterprise.

Template airbyte.yml file

webapp-url: # example: http://localhost:8080

initial-user:
  email:
  first-name:
  last-name:
  username: # your existing Airbyte instance username
  password: # your existing Airbyte instance password

license-key:

# Enables Self-Managed Enterprise.
# Do not make modifications to this section.

global:
  edition: "pro"

keycloak:
  enabled: true
  bypassInit: false

keycloak-setup:
  enabled: true

server:
  env_vars:
    API_AUTHORIZATION_ENABLED: "true"
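Before deploying, it can help to confirm that the top-level fields in the template were actually filled in. The following is a minimal sketch, not part of the Airbyte tooling: check_airbyte_yml is a hypothetical helper that only looks at the two top-level scalar fields webapp-url and license-key, and it uses a simple line match rather than a YAML parser.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not an Airbyte command): report which of the
# top-level fields webapp-url and license-key are still empty.
check_airbyte_yml() {
  local file="$1" key status=0
  for key in webapp-url license-key; do
    # A field counts as filled when "key:" at column 0 is followed by
    # something other than whitespace or a "#" comment.
    if ! grep -Eq "^${key}:[[:space:]]*[^#[:space:]]" "$file"; then
      echo "missing value: ${key}"
      status=1
    fi
  done
  return "$status"
}
```

Running check_airbyte_yml ./airbyte.yml prints one line per empty field and returns a non-zero status if any are missing. The nested initial-user fields are not checked by this sketch.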

Step 3: Configure your Deployment

Configure User Authentication

- Fill in the contents of the initial-user block. The credentials grant an initial user with admin permissions. You should store these credentials in a secure location.
- Add your Airbyte Self-Managed Enterprise license key to your airbyte.yml in the license-key field.
- To enable SSO authentication, add SSO auth details to your airbyte.yml file.

Configuring auth in your airbyte.yml file

Okta:

To configure SSO with Okta, add the following at the end of your airbyte.yml file:

auth:
  identity-providers:
    - type: okta
      domain: $OKTA_DOMAIN
      app-name: $OKTA_APP_INTEGRATION_NAME
      client-id: $OKTA_CLIENT_ID
      client-secret: $OKTA_CLIENT_SECRET

See the following guide on how to collect this information for Okta.

Other:

To configure SSO with any identity provider via OpenID Connect (OIDC), such as Azure Entra ID (formerly ActiveDirectory), add the following at the end of your airbyte.yml file:

auth:
  identity-providers:
    - type: oidc
      domain: $DOMAIN
      app-name: $APP_INTEGRATION_NAME
      client-id: $CLIENT_ID
      client-secret: $CLIENT_SECRET

See the following guide on how to collect this information for Azure Entra ID (formerly ActiveDirectory).

To modify auth configurations on an existing deployment (after Airbyte has been installed at least once), you will need to helm upgrade Airbyte with the additional environment variable --set keycloak-setup.env_vars.KEYCLOAK_RESET_REALM=true. As this also resets the list of Airbyte users and permissions, please use this with caution.
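As a sketch of what that upgrade could look like, the function below combines the helm upgrade command used elsewhere in this guide with the reset flag described above. The function name upgrade_with_realm_reset is hypothetical, and the release name, namespace, and file path are the defaults from this guide; adjust them if yours differ.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: re-run the guide's helm upgrade with the
# Keycloak realm reset flag. This resets Airbyte users and permissions.
upgrade_with_realm_reset() {
  local release="${1:-airbyte-enterprise}"
  helm upgrade \
    --namespace airbyte \
    --install "$release" \
    "airbyte/airbyte" \
    --set-file airbyteYml="./airbyte.yml" \
    --set keycloak-setup.env_vars.KEYCLOAK_RESET_REALM=true
}
```

Calling upgrade_with_realm_reset with no arguments targets the airbyte-enterprise release. Leave the flag off on later upgrades so that users and permissions are not reset again.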

To deploy Self-Managed Enterprise without SSO, exclude the entire auth: section from your airbyte.yml config file. You will authenticate with the instance admin user and password included in your airbyte.yml. Without SSO, you cannot currently have unique logins for multiple users.

Configuring the Airbyte Database

For Self-Managed Enterprise deployments, we recommend using a dedicated database instance (such as AWS RDS or GCP Cloud SQL) for better reliability and backups, instead of the default internal Postgres database (airbyte/db) that Airbyte spins up within the Kubernetes cluster.

We assume in the following that you've already configured a Postgres instance:

External database setup steps

- Add external database details to your airbyte.yml file. This disables the default internal Postgres database (airbyte/db), and configures the external Postgres database:

postgresql:
  enabled: false

externalDatabase:
  host: ## Database host
  user: ## Non-root username for the Airbyte database
  database: db-airbyte ## Database name
  port: 5432 ## Database port number

- For the password of the non-root user with database access, you may use password, existingSecret or jdbcUrl. We recommend using existingSecret, or injecting sensitive fields from your own external secret store. These parameters are mutually exclusive:

postgresql:
  enabled: false

externalDatabase:
  ...
  password: ## Password for non-root database user
  existingSecret: ## The name of an existing Kubernetes secret containing the password.
  existingSecretPasswordKey: ## The Kubernetes secret key containing the password.
  jdbcUrl: "jdbc:postgresql://<user>:<password>@localhost:5432/db-airbyte" ## Full database JDBC URL. You can also add additional arguments.

The optional jdbcUrl field should be entered in the following format: jdbc:postgresql://localhost:5432/db-airbyte. We recommend against using this unless you need to pass additional arguments to the JDBC driver (e.g. to handle SSL).
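If you follow the existingSecret recommendation, the secret has to exist in the airbyte namespace before you deploy. The following is a minimal sketch of one way to produce it: render_db_secret is a hypothetical helper that prints a standard Kubernetes Secret manifest, and the secret name and key you pass in are your own choices, not names required by Airbyte.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a Kubernetes Secret manifest that stores
# the database password under the given key, base64-encoded as the
# "data" field of a Secret requires.
render_db_secret() {
  local name="$1" key="$2" password="$3" encoded
  encoded="$(printf '%s' "$password" | base64 | tr -d '\n')"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ${name}
  namespace: airbyte
type: Opaque
data:
  ${key}: ${encoded}
EOF
}
```

For example, render_db_secret airbyte-db-secret password "$DB_PASSWORD" | kubectl apply -f - would create the secret, after which existingSecret would be set to airbyte-db-secret and existingSecretPasswordKey to password. Both names here are placeholders.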

Configuring External Logging

For Self-Managed Enterprise deployments, we recommend spinning up standalone log storage for additional reliability, using tools such as S3 and GCS, instead of the default internal Minio storage (airbyte/minio). It's then a common practice to configure additional log forwarding from external log storage into your observability tool.

External log storage setup steps

To do this, add external log storage details to your airbyte.yml file. This disables the default internal Minio instance (airbyte/minio), and configures the external log storage:

S3:

minio:
  enabled: false

global:
  storage:
    type: "S3"
    bucket: ## S3 bucket names that you've created. We recommend storing the following all in one bucket.
      log: airbyte-bucket
      state: airbyte-bucket
      workloadOutput: airbyte-bucket
    s3:
      region: "" ## e.g. us-east-1
      accessKeyExistingSecret: ## The name of an existing Kubernetes secret containing the AWS Access Key.
      accessKeyExistingSecretKey: ## The Kubernetes secret key containing the AWS Access Key.
      secretKeyExistingSecret: ## The name of an existing Kubernetes secret containing the AWS Secret Access Key.
      secretKeyExistingSecretKey: ## The Kubernetes secret key containing the AWS Secret Access Key.

Then, ensure your access key is tied to an IAM user with the following policies, allowing the user access to S3 storage:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": "s3:ListAllMyBuckets",
      "Resource": "*"
    },
    {
      "Effect": "Allow",
      "Action": ["s3:ListBucket", "s3:GetBucketLocation"],
      "Resource": "arn:aws:s3:::YOUR-S3-BUCKET-NAME"
    },
    {
      "Effect": "Allow",
      "Action": [
        "s3:PutObject",
        "s3:PutObjectAcl",
        "s3:GetObject",
        "s3:GetObjectAcl",
        "s3:DeleteObject"
      ],
      "Resource": "arn:aws:s3:::YOUR-S3-BUCKET-NAME/*"
    }
  ]
}

GCS:

minio:
  enabled: false

global:
  storage:
    type: "GCS"
    bucket: ## GCS bucket names that you've created. We recommend storing the following all in one bucket.
      log: airbyte-bucket
      state: airbyte-bucket
      workloadOutput: airbyte-bucket
    gcs:
      credentials: ""
      credentialsJson: "" ## Base64 encoded json GCP credentials file contents.

Note that the credentials and credentialsJson fields are mutually exclusive.
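The credentialsJson field expects the credentials file as a single base64 string. A minimal sketch of producing that value follows; encode_credentials is a hypothetical helper name, and the approach (piping through tr to strip line wraps) is chosen only so that it behaves the same with GNU and BSD versions of base64.

```shell
#!/usr/bin/env bash
# Hypothetical helper: base64-encode a credentials file onto one line
# so the result can be pasted into the credentialsJson field.
encode_credentials() {
  # Some base64 implementations wrap long output; remove the newlines.
  base64 < "$1" | tr -d '\n'
}
```

For example, encode_credentials ./gcp-credentials.json prints the encoded contents, where the file path is a placeholder for your own service account key file.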

Configuring Ingress

To access the Airbyte UI, you will need to manually attach an ingress configuration to your deployment. The following is a slimmed-down definition of an ingress resource you could use for Self-Managed Enterprise:

Ingress configuration setup steps

Generic:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: # ingress name, example: enterprise-demo
  annotations:
    ingress.kubernetes.io/ssl-redirect: "false"
spec:
  rules:
  - host: # host, example: enterprise-demo.airbyte.com
    http:
      paths:
      - backend:
          service:
            # format is ${RELEASE_NAME}-airbyte-webapp-svc
            name: airbyte-pro-airbyte-webapp-svc
            port:
              number: # service port, example: 8080
        path: /
        pathType: Prefix
      - backend:
          service:
            # format is ${RELEASE_NAME}-airbyte-keycloak-svc
            name: airbyte-pro-airbyte-keycloak-svc
            port:
              number: # service port, example: 8180
        path: /auth
        pathType: Prefix
      - backend:
          service:
            # format is ${RELEASE_NAME}-airbyte-api-server-svc
            name: airbyte-pro-airbyte-api-server-svc
            port:
              number: # service port, example: 8180
        path: /v1
        pathType: Prefix

Amazon ALB:

If you intend to use Amazon Application Load Balancer (ALB) for ingress, this ingress definition will be close to what's needed to get up and running:

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: <INGRESS_NAME>
  annotations:
    # Specifies that the Ingress should use an AWS ALB.
    kubernetes.io/ingress.class: "alb"
    # Redirects HTTP traffic to HTTPS.
    ingress.kubernetes.io/ssl-redirect: "true"
    # Creates an internal ALB, which is only accessible within your VPC or through a VPN.
    alb.ingress.kubernetes.io/scheme: internal
    # Specifies the ARN of the SSL certificate managed by AWS ACM, essential for HTTPS.
    alb.ingress.kubernetes.io/certificate-arn: arn:aws:acm:us-east-x:xxxxxxxxx:certificate/xxxxxxxxx-xxxxx-xxxx-xxxx-xxxxxxxxxxx
    # Sets the idle timeout value for the ALB.
    alb.ingress.kubernetes.io/load-balancer-attributes: idle_timeout.timeout_seconds=30
    # [If Applicable] Specifies the VPC subnets and security groups for the ALB
    # alb.ingress.kubernetes.io/subnets: '' e.g. 'subnet-12345, subnet-67890'
    # alb.ingress.kubernetes.io/security-groups: <SECURITY_GROUP>
spec:
  rules:
  - host: <WEBAPP_URL> # e.g. enterprise-demo.airbyte.com
    http:
      paths:
      - backend:
          service:
            name: airbyte-pro-airbyte-webapp-svc
            port:
              number: 80
        path: /
        pathType: Prefix
      - backend:
          service:
            name: airbyte-pro-airbyte-keycloak-svc
            port:
              number: 8180
        path: /auth
        pathType: Prefix
      - backend:
          service:
            # format is ${RELEASE_NAME}-airbyte-api-server-svc
            name: airbyte-pro-airbyte-api-server-svc
            port:
              number: # service port, example: 8180
        path: /v1
        pathType: Prefix

The ALB controller will use a ServiceAccount that requires the following IAM policy to be attached.

Once this is complete, ensure that the value of the webapp-url field in your airbyte.yml is configured to match the ingress URL.
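One way to sanity-check that match is to reduce the webapp-url value to its hostname and compare it with the host in your ingress rule. The following is a minimal sketch; url_host is a hypothetical helper that uses plain shell parameter expansion and assumes the URL has no embedded credentials.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the hostname from a URL such as the
# webapp-url value, dropping the scheme, any path, and any port.
url_host() {
  local url="$1"
  url="${url#*://}"   # drop the scheme, if present
  url="${url%%/*}"    # drop the path
  url="${url%%:*}"    # drop the port
  printf '%s\n' "$url"
}
```

The result can be compared with the host reported by kubectl get ingress <INGRESS_NAME> --namespace airbyte -o jsonpath='{.spec.rules[0].host}', where <INGRESS_NAME> is the name you gave your ingress resource.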

You may configure ingress using a load balancer or an API Gateway. We do not currently support most service meshes (such as Istio). If you are having networking issues after fully deploying Airbyte, please verify that firewalls or lacking permissions are not interfering with pod-pod communication. Please also verify that deployed pods have the right permissions to make requests to your external database.

Step 4: Deploy Self-Managed Enterprise

Install Airbyte Self-Managed Enterprise using helm with the following command:

helm install \
  --namespace airbyte \
  "airbyte-enterprise" \
  "airbyte/airbyte" \
  --set-file airbyteYml="./airbyte.yml"

The default release name is airbyte-enterprise. You can change this by modifying the above helm install command.
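After the install command returns, you may want to confirm that the release exists and that its deployments have become available. The following is a minimal sketch using standard helm and kubectl commands; the function name verify_airbyte_release and the 600 second timeout are assumptions for illustration, not values from Airbyte.

```shell
#!/usr/bin/env bash
# Hypothetical helper: show the helm release status, then block until
# every deployment in the namespace reports the Available condition.
verify_airbyte_release() {
  local release="${1:-airbyte-enterprise}" namespace="${2:-airbyte}"
  helm status "$release" --namespace "$namespace" &&
    kubectl wait deployment --all \
      --namespace "$namespace" \
      --for=condition=Available \
      --timeout=600s
}
```

Calling verify_airbyte_release with no arguments checks the default airbyte-enterprise release in the airbyte namespace. If the wait times out, kubectl get pods --namespace airbyte shows which pods are not yet ready.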

Updating Self-Managed Enterprise

Upgrade Airbyte Self-Managed Enterprise by:

- Running helm repo update. This pulls an up-to-date version of our helm charts, which is tied to a version of the Airbyte platform.
- Re-installing Airbyte Self-Managed Enterprise:

helm upgrade \
  --namespace airbyte \
  --install "airbyte-enterprise" \
  "airbyte/airbyte" \
  --set-file airbyteYml="./airbyte.yml"

Customizing your Deployment

To customize your deployment, create an additional values.yaml file in your airbyte directory, and populate it with configuration override values. A thorough values.yaml example including many configurations can be found in the charts/airbyte folder of the Airbyte repository.

After specifying your own configuration, run the following command:

helm upgrade \
  --namespace airbyte \
  --install "airbyte-enterprise" \
  "airbyte/airbyte" \
  --set-file airbyteYml="./airbyte.yml" \
  --values path/to/values.yaml

Customizing your Service Account

You may choose to use your own service account instead of the Airbyte default, airbyte-sa. This may allow for better audit trails and resource management specific to your organizational policies and requirements.

To do this, add the following to your airbyte.yml:

serviceAccount:
  name: